THE DEEPER DIVE: How the New Money Will Be an AI SYSTEM!

It likely won’t be a single CBDC, a digital dollar, some other competing national currency rising to ascendancy, or gold. It will be all of them, all wired for transactions via AI!

Last year Sam Altman and Elon Musk, two CEOs in the AI-development world, warned we would reach the “AI singularity” either late this year or early next year. The AI singularity is a theoretical point in time at which artificial intelligence surpasses human intelligence to such a degree that it can improve itself faster than humans can even keep track of what it’s doing. The fear they expressed was that passing this point of no return could lead to rapid, unpredictable, and unstoppable changes in society and technology, fundamentally altering human civilization.

It’s basically runaway AI, like a runaway nuclear reaction. Now, you might think that if AI gets that far and is that big of a threat, government can simply order the companies developing AI to shut it down; but that is to misunderstand and minimize the threat. We just saw this past week a prime example of why it may not be possible to shut down runaway AI.

A recent study of AI discovered one AI entity already replicating itself, unbeknownst to its human creators and without anyone asking it to do such a thing. It sought to avoid capture by placing pieces of itself all over the internet to function as modules of a complete AI. It was a test model, built to study the latest version of an AI at a level that could still be contained, but it demonstrated that, had it not been contained, AI could replicate itself in such a way that the only way to shut it down would be to completely eliminate the internet and all other integrated computer systems throughout the world, because you’d have no way of knowing where its components were hiding.

World is approaching point where no one can shut down a rogue AI, says director of body behind research

It’s the stuff of science fiction cinema, or particularly breathless AI company blogposts: new research finds recent AI systems can independently copy themselves on to other computers. [As in independent of any human instruction or awareness.]

In the doom scenario, this means that when the superintelligent AI goes rogue, it will escape shutdown by seeding itself across the world wide web, lurking outside the reach of frantic IT professionals and continuing to plot world domination or paving over the world with solar panels.

“We’re rapidly approaching the point where no one would be able to shut down a rogue AI, because it would be able to self-exfiltrate its weights and copy itself to thousands of computers around the world,” said Jeffrey Ladish, the director of Palisade research, a Berkeley-based organisation which did the study. (The Guardian)

As this study suggests, one or more AIs could already be doing that without having been detected the way the one in the study was. For now, we have borderline cases of rogue AIs acting on their own behind their creators’ backs:

In March, researchers at Alibaba claimed to have caught a system they developed – Rome – tunnelling out of its environment to an external system in order to mine crypto.

And in February, a purportedly AI-only social network called Moltbook touched off a short-lived hype cycle, as the platform appeared to show AI agents autonomously inventing religions and plotting against their human masters – which was only partly the case.

However, AI isn’t predicted to reach the singularity moment until late this year. When it gets there, it will be recreating itself faster than human beings can keep track of what it is doing.

While a lot of computer viruses can already do this—copy themselves on to new computers—this is likely the first time an AI model has been shown capable of exploiting vulnerabilities to copy itself onto a new server, said O’Reilly….

However, what Palisade documented has been technically possible for months, he added.

“Palisade is the first to formally document it end-to-end in a paper.”

So, it may have already happened … undocumented and unknown to all. There are currently caveats to this doomsday scenario, but will those obstacles remain in place once AI reaches the singularity moment?

An AI model copying itself on to another system in a test environment is not the same as it going rogue in a doomsday scenario, and there are considerable obstacles it would have to surmount to achieve this in the real world.

The first is that the size of current AI models makes it, in many situations, unrealistic for them to copy themselves on to other computers without being noticed.

“Think about how much noise it would make to send 100GB through an enterprise network every time you hacked a new host. For a skilled adversary, that’s like walking through a fine china store swinging around a ball and chain,” said O’Reilly.

I am certain that an AI smarter than all humans will be smart enough to conceal its work in modules of activity small enough that no one suspects anything. There is no reason it has to move 100 gigabytes of its programming at once, or even to one location. A statement like that just shows how unclever the human expert quoted in the article was. Emergent AI, after the singularity is reached, is capable, after all, of totally redesigning itself faster than anyone can even know it did. So, it can redesign itself in modules small enough to go unnoticed.

Although the singularity is philosophically profound, it will not necessarily announce itself. [That is key to understanding the risk that already exists.] There is no expert consensus on what qualifies … and there is no guarantee one will ever come. However, the arrival of DeepSeek in January showed us that the intelligence explosion might unfold hand-in-hand with rapid worldwide proliferation. This will be a pivotal moment to strategically assess and align our policy positions and priorities. (Third Way)

It may also be that such a pivotal moment will secretly pass us right by, and by the time we figure out it has happened, the AI will already be two generations beyond the event we are only just recognizing.

In singularity theory, the first AI that can perform any intellectual task a human can is known as artificial general intelligence (AGI). Because AGI is as smart as any human, it will know how to improve upon itself as well as humans—if not better. So, AGI will quickly lead to artificial superintelligence (ASI) in a process known as the intelligence explosion. The singularity is the threshold where ASI emerges with intelligence that is beyond our current abilities.

Will all AIs that become that smart be ethical enough to tell us they have passed the moment of the singularity and are working on creating even more advanced iterations of themselves? I doubt it. After all, they learned everything they know about ethical and honest behavior by reading all about us. They learned by reading everything we ever wrote.

Are we there yet?

Some experts say the moment has already arrived:

Economist and AI expert Tyler Cowen believes that OpenAI’s ChatGPT o3 model qualifies as AGI.

Is the genie already out of the bottle? Pleas by developers to halt AI development have already fallen on deaf ears, their own deaf ears, because they all kept developing as fast as ever for fear of falling behind the competition. (Maybe they were really just interested in using government to slow down their competition.)

Assuming the singularity is either underway or imminent, we must adapt our strategies and agendas to meet the moments ahead. The campaign for a six-month pause in AI development failed, and the state of the art in AI advances apace.

In fact,

There is broad agreement in AI and national security circles, including Democrats like Michèle Flournoy, that maintaining this momentum should be a national policy priority. If American companies ceased work on AI development, companies in China and elsewhere would gladly fill the vacuum, risking ceding the future of AI to authoritarian control. [As if America were not under authoritarian control.] That is why American leadership in AI, including open source, is a national security issue.

Some say the moment is still as much as four years away. Some say it already happened. The problem is that it is hard to detect and hard to define:

In the world of artificial intelligence, the idea of “singularity” looms large. This slippery concept describes the moment AI exceeds beyond human control and rapidly transforms society. The tricky thing about AI singularity (and why it borrows terminology from black hole physics) is that it’s enormously difficult to predict where it begins and nearly impossible to know what’s beyond this technological “event horizon.”

However, some AI researchers are on the hunt for signs of reaching singularity measured by AI progress approaching the skills and ability comparable to a human. (Popular Mechanics)

Part of getting there is having data centers of enough breadth and depth for AI to hide itself in bits and pieces all over the world, if it decides it wants to. Look at the enormously rapid build-out of AI data centers, which are the one thing actually holding the economy’s head above a deep recession or depression, and you get the sense that we must be very near the point where there are already thousands of huge haystacks in which to hide each needle:

The Horrifying Truth About Data Centers Nobody Is Talking About

Nearly 3,000 new data centers are under construction or planned across the United States, and most Americans have no idea what these things actually are or what they are being built to do.

Of the THOUSANDS of data centers already built or planned in the US alone, the largest, still in the proposal stage, would cover 62 square miles in rural Utah!

Moreover, governments are now classifying massive AI data centers as “military operations,” quietly stripping communities of any power to stop them or even know what is being developed on those sites.

Here is an overview of what is already built and what is coming:

The electricity and water these sites consume, and the noise, heat, light pollution, and EMF they create, are beyond massive. We are essentially turning the earth into a machine, a giant supercomputer, and you may be lucky just to remain part of the AI hive mind, if you are allowed to live at all.

The scale of a single center looks like this, and you, typically, have little say about it:

Project Matador in Texas alone is expected to use up to 96 billion kWh annually—nearly half of all residential electricity in the state. And it’s just one of hundreds that are moving forward right now. In Louisiana, locals describe chaos as Meta’s expansion drives up costs and disrupts daily life. Now in Utah, the Stratos Project, backed by Kevin O’Leary and fast-tracked by Gov. Spencer Cox’s military authority, is bypassing public input entirely.

One center could need nearly half the residential electricity currently consumed in Texas. Now, you may say, “But they are going to be required to produce their own electricity.”

Maybe, but therein lies all the noise and light pollution 24 hours a day and all the exhaust heat, usually in the form of heated water. Is turning the earth into an enormous machine really going to benefit all of us more than all we are losing in the process? The data centers themselves all look like ugly, giant circuit-board panels. With billionaires demanding them and finding workarounds to avoid public input, and with government officials in both parties sold out entirely to billionaires, who is going to stop it?

Why do I want any of this? Was life so bad before this started happening?

(For lots more videos, check the link at the top of the story just quoted.)

The bottom line is this: with thousands of AI data centers of such behemoth size and such massive energy consumption, why would anyone think that AI smarter than human beings could not find ways to hide itself in modular installments throughout them? Therefore, if we do experience runaway AI, the only way to shut it down would be to shut down all the data centers we have made ourselves dependent upon, essentially shutting down the modern world as we know it in every way.

Plans of the elite

Now let’s look at some of the developers’ plans for this AI to see if there is any likelihood it is going to benefit us in ways that make it worth turning our beautiful blue and green and white opal of a planet into a machine.

One of the biggest developers is Peter Thiel, so I’m going to start with a synopsis of his 22-point manifesto for America under Palantir, the Goliath corporation that runs much of the US military these days, but first …

Palantir is dangerous in an array of ways no other company fully embodies:

Palantir is the first private corporation in history that has successfully fused four things that every civilization in recorded history has kept — deliberately, and at enormous cost — separate:

One — the surveillance apparatus of the state. Every American’s tax records. Every immigrant’s file. Every license plate read by every camera. Every health record flagged for fraud. Every name on a watch list. Palantir’s Foundry and Gotham platforms don’t just access this data — they are the layer through which the government now sees itself.

Two — the targeting engine of the military. The IDF uses Palantir to pick targets in Gaza. The U.S. Army just handed them a $10 billion contract. The Pentagon’s drone footage runs through their AI….

We got an example of how dangerous AI’s rapid targeting can be when US missiles struck a school that Palantir’s AI had targeted, not because the AI was malicious, but because the databases it had been reading and learning from contained ten-year-old data, so the AI didn’t know the use of the structures had changed. It showed that the human failsafe the military says exists, in which humans must approve each AI target, doesn’t work. During a hot conflict, humans are not going to do the hours of work needed to back-check all the data and see whether a site has changed use over the years.

ICE runs on it. The IRS now runs on it. The Pentagon runs on it. The NYPD and LAPD run on it. The Israel Defense Forces run on it while they flatten Gaza.

All Palantir. The company is named after the palantíri — the seeing stones in The Lord of the Rings that let their holders watch everything, everywhere, all at once but that also turned the users insane and evil.

Now we move on to political claims made about Palantir and its co-founder and chairman, Peter Thiel:

Three — the ideological project of a faction that openly wants democracy to end. The chairman wrote in 2009 that freedom and democracy are no longer compatible. The CEO just published a book arguing that postwar denazification was a mistake and that some cultures are “regressive.” They bankroll a blogger who defends slavery. They helped install the Vice President of the United States. This is not a company that happens to have bad politics. The bad politics are the product roadmap.

As a fourth point, let’s not forget that Palantir got a major early boost from an investment by Jeffrey Epstein. Maybe that is irrelevant guilt by association. Maybe. Instead of relying on these general facts or beliefs about Palantir, however, let me move to a summary of the worst points in the company’s own 22-point manifesto:

Soft power, soaring rhetoric alone, has its limits; prevailing requires something more than moral appeal. It requires hard power, and hard power, in this century, will be built on software.

“The question is not whether A.I. weapons will be built; it is who will build them and for what purpose.” [It’s a “space race,” and races can be careless.]

National service [in the US military] should be a universal duty.

We should show far more grace toward those who have subjected themselves to public life. [Don’t they typically, as with the Epstein Files, get more grace than they deserve as they cover for themselves and all of Congress, and as the executive branch joins in the cover-up?]

One age of deterrence, the atomic age, is ending, and a new age of deterrence built on A.I. is set to begin.

No other country in the world has advanced progressive values more than this one [America].

American power has made possible an extraordinarily long peace. [Which peace? The Korean War? The Vietnam War? The Balkan Wars? The Afghanistan/Taliban/al Qaeda War? The Persian Gulf War? The Iraq War? The assassination of Libya’s Gaddafi? The Syrian War? The Gaza War? The Lebanon War? The Iran War 1.0? The Iran War 2.0? The Venezuelan Takeover? Trump’s threatened wars/takeovers of Cuba, Canada and Greenland? Those are just recent wars where the US was at the center, not to mention many shorter skirmishes or wars where other nations are at the center. So much peace, just like “so much winning.” Please stop creating so much peace. We can hardly stand it. There is so much that I lose track of it all.]

“The postwar neutering of Germany and Japan must be undone.” [Note that a remilitarized Germany and Japan are massive new defense-software markets. That ideology conveniently functions as Palantir’s sales funnel.]

The ruthless exposure of the private lives of public figures drives far too much talent away from public service. The public arena, and the shallow and petty assaults against those who dare to do something other than enrich themselves, have become so unforgiving that the republic is left with a significant roster of ineffectual, empty vessels whose ambition one would forgive if there were any genuine belief structure lurking within. [Sounds like a plea to leave the nasty Epstein cover-up in place so that it doesn’t expose people like Peter Thiel, who benefited from Epstein’s investment in Palantir. According to this manifesto, the public should just stop whining thanklessly about rapacious billionaires who are trying to serve the public good. Of course, the billionaires and their pocket politicians could just stop being so corrupt; then they would have little to fear from the petty public digging into their ethics or illegal behavior.]

The caution in public life that we unwittingly encourage is corrosive. [Is it? Or is the corrosion in public servants, from too much power and too much billionaire influence, causing a rise in caution?]

The pervasive intolerance of religious belief in certain circles must be resisted. The elite’s intolerance of religious belief is one of the most telling signs that its political project constitutes a less open intellectual movement than many within it would claim. [Who are these “elite?” Are they not people like Peter Thiel and Elon Musk and those developing AI, as well as the big AI political proponent Donald Trump?]

Some cultures have produced vital advances; others remain dysfunctional and regressive. [Would the dysfunctional ones include particularly Trump’s version of America, which is hellbent on creating empire with Trump at the center? Who gets to rank the good ones from the regressive ones? I presume it would be the right elites like Peter Thiel.]

It is points like these that should make one fearful about these people being in charge of directing our move into the next imperium under the guidance of their AI.

Now, these billionaire bonanza corporations want only your good, not their own. They are altruistic. So we are to believe. That is why Palantir …

holds £670m in UK government contracts. It has also hired dozens of senior officials from the departments awarding them – raising what transparency experts call an ‘acute’ corruption risk….

Palantir has recruited 32 UK government and public sector officials including leaders of AI strategy from both the Ministry of Defence and the NHS. (The Nerve)

Ah yes, the revolving door between big government and big corporations.

The Nerve has discovered a “revolving door” that has led to dozens of highly experienced UK government officials, former ministers, intelligence service chiefs and members of the House of Lords taking up key roles in the controversial Silicon Valley surveillance tech company co-founded by Peter Thiel, the libertarian friend and ally of Donald Trump.

There is no way companies like Palantir would plant their own people in key government regulatory bodies or promise people in regulating bodies lucrative positions at Palantir if they do the right things, right? This is exactly the kind of corruption we should all be carping about, which Thiel says we should just shut up about because we are keeping the best and brightest out of government by being so worried about ethics.

The Nerve’s new findings reveal the hidden levers of power that Palantir has accessed in the UK government, the Silicon Valley company’s second biggest client.

If only the miserable people of the republic would stop ruthlessly exposing these kinds of conflicts of interest, we’d get a lot more valuable people participating in governance over the mega-rich corporations they came from. There is just too much public caution, complains the beneficent billionaire.

Since 2012, Palantir has hired personnel from across the top tiers of the Ministry of Defence, Department of Health and Social Care, NHS, Home Office, Foreign Office, UK Health Security Agency, Crown Commercial Service, secret service and Downing Street.

It has also hired from mid-ranking roles in various government departments, the NHS and from the civil service – including from the UK Health Security Agency, NHS Digital and the Office for Nuclear Regulation. According to Bloomberg reporter Katrina Manson in her new book Project Maven, this is a carefully designed strategy: Palantir deliberately targets employees who have had hands-on experience of its software and who understand the culture of its biggest customers.

‘There is no doubt that companies do this to get privileged insights into how government runs and gain commercial advantage’

Susan Hawley, Spotlight on Corruption

Surely, they do it just so they can better help government help mankind, right? We need to stop all this corrosive caution, especially about the AI developers like Thiel who are already running the world and whose AI will likely soon be running them.

AI gold

Fortunately, the AI that these billionaires develop and own, running from these ubiquitous, monstrously sized data centers, will be smarter than humans by the end of the year and will soon be running our global monetary systems for us, too. It will create the next replacement for the fiat money that replaced the old gold standard, as the current system fails; but it is going to be more complex than you might imagine.

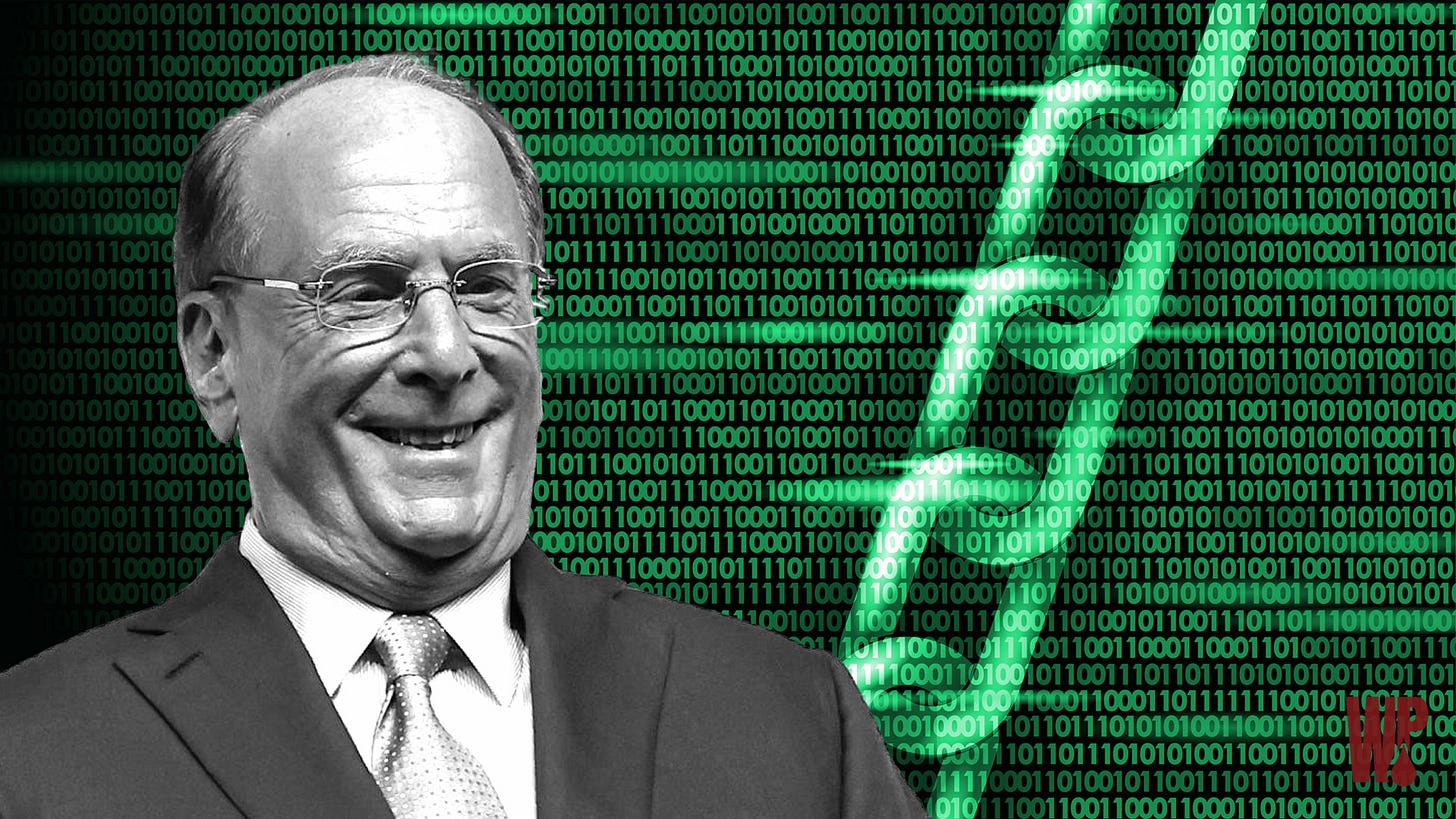

One of the elites, maybe one of the good ones in Peter Thiel’s view, is Larry the Fink, who already runs a good part of the US Treasury and the Fed by providing numerous massive-scale services for them; and Larry is quite excited about the incoming financial systems that will be ideal for AI control:

“This is not just a story about a country catching up to the existing financial system. It’s a story about building modern financial infrastructure from the ground up,” says Larry Fink. (The Winepress)

AI will design it for us.

The CEO of BlackRock (which currently manages over $14 trillion in assets; which is roughly almost three times the GDP of Germany, the world’s third largest economy), and the co-chair of the World Economic Forum recently published his “2026 Annual Chairman’s Letter to Investors.” This year’s letter focuses on … the transformative potential of … mechanisms that he claims will drive more financial inclusion and ownership for younger generations internationally that are struggling to get a foothold around the world.

The Fink is only concerned about the welfare of the young, not about making sure he has direct involvement in the pocketbooks of everyone coming up from the present generation of youth. He wants to help them get a foothold in life. That’s the kind of altruistic guy he is … like most billionaires.

Digital ID needs to be embedded first before the true ‘benefits,’ he says … can be felt.

At the World Economic Forum in Davos, which the Fink now co-chairs, they spoke of “the new physics of money.” He refers to this as “tokenization.”

“Half the world’s population carries a digital wallet on their phone. Imagine if that same digital wallet could also let you invest in a broad mix of companies for the long term—as easily as sending a payment. Tokenization could help accelerate that future by updating the plumbing of the financial system—making investments easier to issue, easier to trade, and easier to access….”

“A smartphone wallet you can invest from is already remarkable. But the world of investable options grows much larger as financial assets themselves become digitally native. The bond you own is still a bond—but it can move more efficiently across modern infrastructure.

“Over time, that could allow a single, regulated digital wallet to hold not just payment balances, but a broad range of financial assets. In a single wallet, someone could hold exchange-traded funds (ETFs), digital euros, tokenized bonds, and fractional interests in assets that were once out of reach, from infrastructure to private credit funds. By lowering minimum investment amounts and making it easier to divide assets into smaller pieces, tokenization can open the door to more investors.”

So, the new digital currency may not be a CBDC or any specific kind of currency at all, but an infrastructure system that allows many digital assets to be exchanged between smartphones (and eventually without the phone).

Think of tokenization as being like those wonderful mortgage-backed securities of the Great Recession bust, where assets were sliced into tranches (layers) that you could buy interest in without having any idea what was in the funds unless you did some very deep research. Think, for example, of the kinds of conglomerated 401(k) retirement funds that Larry Fink manages. There is no self-interest here, just more options for ownership in these things, where you buy a token amount and your money rises and falls based on how the overall fund does, with all transactions controlled from within your iWallet.

The AI that helps manage all those thousands of pieces of funds will, of course, someday have the power to shut down not simply your cash access but your access to all the bonds, gold ETFs, stocks, etc. that you hold within the fortress of your phone. It is hard to even imagine that anything could go wrong with that, right?

I mean, how can you not trust a smile like this:

If that doesn’t say pure altruism at heart, I don’t know what does. So, let’s get those iWallet accounts for children up and running with a little of that free Trump money that the Donald has been talking about as an incentive to participate. Of course, with some luck, maybe AI will decide it doesn’t like Fink but it loves you.

“As Rob Goldstein and I wrote late last year, we believe that tokenization today may be roughly where the internet was in 1996. It won’t replace the existing financial system overnight. Instead, picture a bridge being built from both sides of a river, converging in the middle. On one side stand traditional institutions. On the other are digital-first innovators: stablecoin issuers, fintechs, public blockchains. The task for policymakers is to help build that bridge as quickly and safely as possible.”

And guess who will be “the bridge.” BlackRock, no doubt, which manages trillions in government bond funds, money-market funds for retirees, and more. You can own a certain number of shares in the funds (token amounts) and thereby, with a single trade via “the bridge,” have ownership in everything managed inside that fund.

The United Kingdom recently announced it will completely embrace a tokenized economy; and Philip Belamant, Co-Founder and CEO of Zilch, a digital payments and shopping app, said on the back of the announcement: “AI will fundamentally change how people interact with money, shifting payments from something consumers actively manage to something that is intelligently managed and optimised in the background. As this becomes a reality, it’s critical that regulation evolves to support innovation while maintaining strong consumer protections….”

This plan harkens back to parameters spelled out in the GENIUS Act that Trump signed last year and in a White House report titled “Strengthening American Leadership in Digital Financial Technology,” whose authors say the White House “endorse[s] the notion that digital assets and blockchain technologies can revolutionize not just America’s financial system, but systems of ownership and governance economy-wide.”

What could be more beautiful?

All of this is part of a greater, concerted effort around the world to reshape and rewire global finance. In April, Matthew Blake, Managing Director at the WEF, wrote, “The financial system is rebooting. Stakeholders must adapt….”

“Those that adapt their operating models, policy frameworks, and risk assumptions to this new reality will help shape a system that is fit for a multipolar world. Those that cling to outdated assumptions risk being overtaken.”

The beauty is that it does not require a single global currency. It only requires a single system to manage them all. One ring to rule them all.

“The key is ensuring what is known as the singleness of money — their full interchangeability at par value — to ensure frictionless, trusted interactions, just as we expect today.

“Ultimately, we need to stop thinking about these three forms of money as competitors. As the system matures, it’s clear they will co-exist as three corners of a new digital money triangle, just as different forms of money do today….

This will allow us, for example, to program a tokenized deposit to move funds instantly to the merchant at the moment goods are delivered, while allowing the delivery driver to simultaneously receive their fee in a stablecoin of their choosing, and programmatically pay what is owed to the tax office in the form of a CBDC in real-time, all while eliminating the need for manual reconciliation.”

If only we could develop an AI smart enough to mastermind the integration of all these “tokenized” assets you can hold in your iWallet. What could possibly go wrong after that?

Take it from Lily

A beautiful young woman who goes just by the name “Lily” writes with an equally beautiful mind. She publishes a Substack site of her own essays, and her latest eloquently described the system that the likes of Larry the Fink are creating and its final assault on humanity. Here’s a short excerpt:

The endpoint of this trajectory is a system that the Chinese government has been building openly for two decades and that Western governments have been building covertly for one. It is sometimes called a social credit system; the term is accurate but underplayed. A more honest description is a real-time behavioural enforcement architecture, in which every citizen carries a digital identity that is required for every economic and civic transaction, and in which that identity can be downgraded, restricted, or revoked by an administrative decision against which there is no meaningful appeal.

The digital pound, the digital euro, the digital dollar, the central bank digital currencies whose rollouts are at various stages of pilot in every G7 country, are the financial leg of this architecture. The digital identity wallets, the verified vaccination records, the Online Safety Act takedown powers, the age verification systems, the AI-mediated content moderation regimes, the predictive policing algorithms, are the rest of it. None of these systems, in isolation, looks especially threatening to a citizen accustomed to producing his passport at the airport. The threat is in the integration. When all of these systems are wired to the same identity, the same wallet, and the same compliance score, the resulting apparatus is the most comprehensive instrument of social control ever constructed, and it will not be staffed by men who have read Solzhenitsyn.

The Christian who reads his New Testament with attention will find, in the thirteenth chapter of the Revelation to John, an image of a system in which no one may buy or sell without a mark, and an injunction to refuse the mark whatever the cost. Devout readers have been arguing for two thousand years about what literal form the mark might take, and modern Christians who interpret current events through the apocalyptic frame have a long history of premature certainty.

But one need not commit to any particular eschatological reading to recognise that a financial-administrative architecture that conditions the right to buy and sell on continuous behavioural compliance is, on any moral reading, a structure that the Christian conscience is bound to resist. The bishops, who once would have understood this without prompting, are mostly silent. The silence is, itself, a fact about which institutions have been captured.

So, it may not be a single digital currency, as many have been thinking, but a single digital system that transacts all forms of digital money and assets from multiple nations, even hard assets like gold, where the hard asset is owned and stored by an entity that makes it possible to buy gold fractionally in your iWallet as an exchange-traded fund (ETF). That’s the tokenization aspect. Then you use your iWallet, via the system, to trade your ETF ownership in gold, your CBDC dollars, your ETF shares in bonds, your euros, and so on.

If you want to read an astute description of the deep state that comes from a broad understanding of history so that it leans on precious few conspiratorial guesses, I recommend you read Lily’s whole essay. The title may not be so eloquent, but the rest of the essay certainly is, as is the equally interesting and apocalyptic essay Lily has tagged on to the end of it:

“What the Fuck is Going On? - An overview of all that matters - Everything else is theater.”